Fabric Zoning for the IBM Spectrum Virtualize and FlashSystem NPIV Feature

Zoning Basics

Before I talk about zoning best practices, I should explain the two types of zoning and how they work: WWPN zoning and switch-port zoning.

World-wide Port Name (WWPN) Zoning

WWPN zoning is also called "soft" zoning and is based on the WWPN that is assigned to a specific port on a fibre-channel adapter. The WWPN serves a similar function to a MAC address on an Ethernet adapter. WWPN-based zoning uses the WWPNs of devices logged into the fabric to determine which devices can connect to which other devices. Most fabrics are zoned using WWPN zoning. It is more flexible than switch-port zoning: a device can be plugged in anywhere on the SAN (with some caveats beyond the scope of this blog post) and can still connect to the other devices it is zoned to. It has one distinct advantage over switch-port zoning, which is that zoning can always be specified at the level of a single WWPN.

Switch-Port Zoning

Switch-port zoning is also called "hard" zoning. Zoning is done based on switch ports. When switch-port zoning is used, all WWPNs logged into the fabric through the switch ports in the same zone are allowed to communicate.

Problems with Switch Port Zoning and the Spectrum Virtualize/FlashSystem NPIV Feature

If you are unfamiliar with the NPIV feature in Spectrum Virtualize or the FlashSystem storage products, you can read about it here.

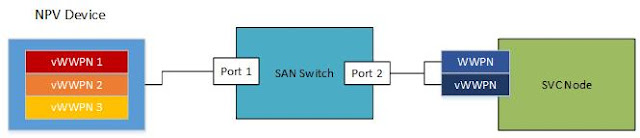

Switch-port zoning works without issue until you have multiple devices logging into the same switch port, such as an NPV device (VMware, VIO, access gateway, etc.), or you enable the NPIV feature on Spectrum Virtualize. To illustrate, consider this simple diagram:

In the above diagram, we have an NPV device on the left with 3 virtual WWPNs (vWWPNs) logged into the fabric on switch Port 1. The NPV device could be a hypervisor, or it could be an access gateway. We have an SVC node with NPIV enabled on the right. This blog post uses SVC as an example but applies to all of the Storwize and FlashSystem products that support NPIV. The SVC node port has a physical WWPN and a vWWPN logged into the fabric on switch Port 2. If we were to use switch-port zoning, the solid line in the next diagram is the zone as created by the SAN administrator. The dashed line is the effective zone. All the devices in the effective zone can communicate with each other. For our example, the host with vWWPN2 is not defined as a host on the SVC cluster and has no vdisks assigned to it.

This zoning can work but has the potential to cause problems. The first problem is vWWPN2 should not be zoned to the SVC, but there is no way to prevent it from connecting to both the SVC physical and virtual WWPNs, since all host WWPNs logged into the fabric on Port 1 can communicate with all node WWPNs logged into the fabric on port 2.
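To make the gap between the administrator's zone and the effective zone concrete, here is a minimal Python sketch of the example. It is only a model, not anything a switch runs: the login table, the WWPN labels and the function names are all hypothetical and simply mirror the diagram described above.

```python
from itertools import combinations

# Hypothetical login table for the example: switch port -> WWPNs logged in through it.
logins = {
    1: ["vWWPN1", "vWWPN2", "vWWPN3"],        # NPV device with three virtual WWPNs
    2: ["SVC_physical_WWPN", "SVC_vWWPN"],    # SVC node port with NPIV enabled
}

def effective_members(port_zone, logins):
    """Return every WWPN logged in through any switch port in the zone."""
    return [wwpn for port in port_zone for wwpn in logins[port]]

def effective_pairs(port_zone, logins):
    """Return all WWPN pairs that may communicate under a switch-port zone."""
    members = sorted(effective_members(port_zone, logins))
    return set(combinations(members, 2))

# The administrator zones Port 1 with Port 2; the fabric permits every pair below.
pairs = effective_pairs([1, 2], logins)
for a, b in sorted(pairs):
    print(a, "<->", b)
```

In this model the host with vWWPN2 ends up paired with both SVC WWPNs, and the three host vWWPNs are paired with each other, even though the administrator only zoned two switch ports together.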

Another problem is that as long as the I/O group the SVC node is in is configured for transitional mode, the host WWPNs can connect to both the physical WWPN and the vWWPN on the node, thus increasing the path count of devices attached to the cluster.

For most of the Storwize and FlashSystem products, the maximum is 512 connections per port per node. This includes nodes, controllers, and hosts but you should check the configuration limits documentation for your specific product. Using Switch-Port based zoning you are at increased risk of hitting the maximum number of fibre-channel connections on that port. If you hit the limit, then the cluster will not allow new host, controller or cluster node connections on that port. This connection count problem is made worse by vWWPN2 connecting to the SVC node port even though it is not defined as a host. You can see how having a large number of vWWPNs logged in to switch Port 1 causes the connection count to the SVC node port to rise rapidly. Running out of available connections to the cluster is the most common problem we see with using switch-port zoning and NPIV.

The third potential problem is that you have far less control over balancing fibre-channel connections across node ports. In the above diagram, switch port 1 has 3 vWWPNs logged in through the NPV device, and each of them connects to both the physical WWPN and the vWWPN on the node, so there are 6 logins to the SVC node port. Another switch port might have a higher number. I have seen as many as 48 devices connected via NPV to a single switch port. For that situation, I would have 96 connections to each cluster node port with switch-port zoning and NPIV in transitional mode on the cluster. That is nearly one fifth (96 of 512) of the available connections to each node port that switch port is zoned to. With switch-port zoning I do not have the ability to balance the host WWPNs across the cluster ports.
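The arithmetic behind those numbers can be sketched in a few lines of Python. The 512 limit is the figure quoted above for most products and should be checked against the configuration limits for your own system; the function and constant names are made up for this illustration.

```python
# Assumed per-port connection limit, taken from the figure quoted in this post.
# Check the configuration limits documentation for your specific product.
PORT_LOGIN_LIMIT = 512

def logins_per_node_port(host_wwpns, transitional=True):
    """Logins one node port receives from a switch port under switch-port zoning.

    In transitional mode each host WWPN can connect to both the physical
    WWPN and the vWWPN of the node port, so the count doubles.
    """
    targets = 2 if transitional else 1
    return host_wwpns * targets

def share_of_limit(login_count, limit=PORT_LOGIN_LIMIT):
    """Fraction of the node port's connection limit that is consumed."""
    return login_count / limit

small = logins_per_node_port(3)    # the diagram: 3 vWWPNs -> 6 logins
large = logins_per_node_port(48)   # 48 NPV-attached devices -> 96 logins
print(small, large, f"{share_of_limit(large):.2%}")  # 6 96 18.75%
```

Every additional switch port zoned to the same node port adds its own doubled count on top of this, which is how the limit gets reached faster than people expect.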

Lastly, vWWPN1, vWWPN2 and vWWPN3 can all communicate with each other. This violates the general best-practice recommendation of isolating hosts to their own zone where possible. You could implement smart zoning, but that complicates your environment and is an additional step to administer and troubleshoot.

WWPN-Based Zoning

Taking our first diagram and implementing WWPN-based zoning gives us the diagram below for both the implemented and effective zoning.

You can see that vWWPN1 and vWWPN3 are each zoned separately to only the vWWPN on the SVC node. They are not zoned to the physical WWPN, and vWWPN2 is not zoned at all. This has a few advantages over switch-port zoning. First, it reduces our connection count to the node port by four. We would have 6 connections if we used switch-port zoning: two connections for each host vWWPN. With WWPN zoning we have two host vWWPNs connecting only to the node vWWPN. Second, you can balance the host connections across the SVC node ports. Third, if the I/O group is in transitional mode, zoning the hosts exclusively to the node vWWPN minimizes disruption when the NPIV mode is changed to enabled.
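The connection-count comparison can be checked with a small Python model. The zone names and WWPN labels here are hypothetical stand-ins for the diagram, and a zone is modelled simply as a set of WWPNs that may talk to each other.

```python
# WWPNs presented by the single SVC node port in the example (labels are illustrative).
node_port_wwpns = {"SVC_physical_WWPN", "SVC_vWWPN"}
host_wwpns = ["vWWPN1", "vWWPN2", "vWWPN3"]

# WWPN-based zoning: one zone per host, containing only the host and the node vWWPN.
# vWWPN2 is not a defined host, so it gets no zone at all.
wwpn_zones = {
    "host1_svc": {"vWWPN1", "SVC_vWWPN"},
    "host3_svc": {"vWWPN3", "SVC_vWWPN"},
}

def node_port_logins(zones, node_wwpns):
    """Count distinct (host WWPN, node WWPN) connections permitted by the zones."""
    connections = set()
    for members in zones.values():
        targets = members & node_wwpns
        initiators = members - node_wwpns
        connections.update((i, t) for i in initiators for t in targets)
    return len(connections)

# Switch-port zoning behaves like one big zone holding everything on both ports.
port_zone = {"port1_port2": set(host_wwpns) | node_port_wwpns}

with_port_zoning = node_port_logins(port_zone, node_port_wwpns)    # 6
with_wwpn_zoning = node_port_logins(wwpn_zones, node_port_wwpns)   # 2
print(with_port_zoning - with_wwpn_zoning)                         # 4
```

Keeping one small zone per host is also what makes it possible to spread hosts across node ports deliberately, since each zone names exactly the target it should use.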

In Summary

While it is supported, it is recommended that you do not use the NPIV feature with switch-port zoning. If you choose to do so, be aware of the potential problems that can impact the health of your SAN and potentially prevent new hosts from connecting to your Spectrum Virtualize, SVC or FlashSystem cluster.

Also keep in mind that the first thing we will do when you open a support case is tell you not to use port-based zoning.
